He spoke not a word, but went straight to his work,

And started all the builds; then turned with a jerk,

And laying his finger aside of his nose,

And giving a nod, up the A/C duct rose;

He sprang to his sleigh, to his team gave a whistle,

And away they all flew like the down of a thistle.

But I heard him exclaim, ere he drove out of sight—

“Happy Christmas to all, and to all a good night!”

- A Visit from St. Nicholas, Clement Clarke Moore (1837)

This is the second part in my Dev Santa Claus series: you can read part 1 here. In this part I’ll talk about my experience over the holidays setting up code coverage metrics for a C++ codebase built using Bamboo, CMake and GCC.

Coverage in C++

When starting out with this project I had no idea how to generate code coverage metrics for a C++ codebase. Searches revealed several tools for extracting coverage information. OpenCppCoverage looks promising for Visual C++ on Windows. For C++ code compiled with GCC there’s gcov. For Clang there’s a gcov-compatible tool called llvm-cov. Without going into much depth in my analysis of each tool I selected gcov for generating coverage on our unit tests. I chose gcov primarily because our products are starting to standardize around GCC on Linux, and because it seemed to be the most commonly used tool in the open source world.

Generating coverage with gcov

gcov is both a standalone tool and part of the GCC compiler itself. The compiler adds instrumentation to binaries produced when the --coverage flag (a synonym for -fprofile-arcs -ftest-coverage -lgcov) is passed to it and produces .gcno files which provide information about the instrumentation. When the instrumented code is run, .gcda files are produced which contain information about how many times each line of code has been executed. These are binary files with a format that is neither publicly documented nor stable, so it is best not to manipulate them directly. Instead, the gcov tool can be used to convert the .gcda files to .gcov files. .gcov files are text-based, and their format is both stable and well-known. Each .gcov file is essentially an annotated code listing marked up with hit counters and other information for each line.

Given the source file:

If we compile the source file with the --coverage flag and run the resulting executable, we get the .gcno and .gcda files containing the coverage data.

We can then use gcov to generate a .gcov file, which can be read by humans or processed by other programs.

main.cpp.gcov looks like this:

As part of GCC, gcov knows which lines of code are executable. Unexecutable lines are marked with -. Executed lines are marked with a count corresponding to the number of times each line was executed. Lines that are executable but weren’t run are marked with #####. Because these files are text-based, they are useful enough on their own for quick analyses. For more user-friendly results there are other tools which process these .gcov files such as lcov and gcovr (these will be covered in more detail later).
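
As a rough illustration of how approachable the format is, the Python sketch below tallies executed and missed lines from .gcov text. The sample listing and the summarize_gcov name are invented for this example; real files also contain header lines (line number 0) and, depending on the options used, extra summary lines, which this sketch simply skips.

```python
# Hypothetical .gcov content; real output depends on your source and gcov version.
SAMPLE_GCOV = r"""
        -:    0:Source:main.cpp
        -:    1:#include <iostream>
        -:    2:
        1:    3:int main(int argc, char** argv) {
        1:    4:    if (argc > 1) {
    #####:    5:        std::cout << "args\n";
        -:    6:    }
        1:    7:    std::cout << "hello\n";
        1:    8:    return 0;
        -:    9:}
"""


def summarize_gcov(text):
    """Return (executed, missed) counts of executable lines in .gcov text."""
    executed = missed = 0
    for line in text.splitlines():
        # Each annotated line is "count:line_number:source". Split only twice
        # so that colons in the source text (e.g. std::cout) are left alone.
        fields = line.split(":", 2)
        if len(fields) < 3:
            continue  # blank lines or extra summary lines
        count = fields[0].strip()
        if count == "-":
            continue  # not an executable line
        if count in ("#####", "====="):
            missed += 1  # executable, but never run
        elif count.rstrip("*").isdigit():
            executed += 1  # run at least once
    return executed, missed


executed, missed = summarize_gcov(SAMPLE_GCOV)
print(f"{executed} of {executed + missed} executable lines were run")
```

For anything beyond a quick check like this, lcov or gcovr are the better choice.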

You may have noticed that while generating this file, gcov also generated a file called iostream.gcov. By default gcov will generate corresponding coverage files for each input file passed to it on the command line, plus it will generate coverage files for all included files. For most applications this information is useless, and may affect the accuracy of the overall coverage numbers.

To exclude files outside the working tree (such as system headers), gcov provides a -r or --relative-only command-line option which will ignore includes which are specified by an absolute path (including the system search paths). Alternatively, you can preserve only the .gcov files for the specific source files you are interested in, and delete all others. This may be a necessary step if these files are being fed into another tool for further processing (e.g. gcovr).

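
The second approach (keep only the .gcov files you care about) could be sketched in Python as follows. This assumes gcov's default naming, where the report for main.cpp is written as main.cpp.gcov in the current directory; prune_gcov_files is a hypothetical helper name, not part of any tool.

```python
from pathlib import Path


def prune_gcov_files(gcov_dir, wanted_sources):
    """Delete .gcov files in gcov_dir that do not belong to wanted_sources."""
    # Assumes default gcov naming: "<source file name>.gcov".
    keep = {Path(src).name + ".gcov" for src in wanted_sources}
    removed = []
    for gcov_file in sorted(Path(gcov_dir).glob("*.gcov")):
        if gcov_file.name not in keep:
            gcov_file.unlink()
            removed.append(gcov_file.name)
    return removed
```

Calling prune_gcov_files(".", ["main.cpp"]) after running gcov would delete iostream.gcov and leave main.cpp.gcov in place.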

gcov and CMake

Another feature of gcov is that it has certain expectations around where to find files. For an input file main.cpp, gcov will expect to find corresponding main.gcda and main.gcno files in the same directory as the source file. The filename the compiler assigns these files is based on the output file name you specify with the -o flag when calling GCC.

This becomes particularly apparent when appending the --coverage flag to a target in CMake. CMake has its own ideas about how to name files, and so for an input file main.cpp, it will create an output file main.cpp.o and main.cpp.gcno. When the code is run, it will generate an output file main.cpp.gcda. When gcov is invoked for main.cpp it will look for main.gcda and main.gcno, which don't exist. Luckily gcov also seems to tolerate having object names fed to it instead of source file names, and will still just strip the last extension from the input filename to find the corresponding .gcda and .gcno files.

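
To make the naming rule concrete, here is a small Python model of the "strip the last extension" behavior described above. It only mimics the observed rule and is not gcov's actual lookup code; coverage_data_files is a made-up name.

```python
from pathlib import PurePosixPath


def coverage_data_files(gcov_input):
    """Model the naming rule: drop the last extension, then add .gcno/.gcda."""
    path = PurePosixPath(gcov_input)
    return path.with_suffix(".gcno"), path.with_suffix(".gcda")


for name in ("main.cpp", "main.cpp.o"):
    gcno, gcda = coverage_data_files(name)
    print(f"gcov {name} -> looks for {gcno} and {gcda}")
```

Passing the source name yields main.gcno and main.gcda, while passing the CMake object name yields main.cpp.gcno and main.cpp.gcda, which are the files CMake builds actually produce.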

If your CMake build is also out-of-tree (as most CMake builds are) then your .o, .gcda and .gcno files are all in the build tree, while the .cpp files are in the separate source tree. So when you call gcov on your source files, it will complain that it cannot find the corresponding object files! Fortunately there is a gcov flag to remedy this, too. The -o or --object-directory flag can take a path to the .gcda and .gcno object files so gcov knows where to look.

Separate build/run paths

Another difficulty with gcov arises when the tests are executed in a different location to where they are built. When tests are compiled with the --coverage flag, the absolute path (relative to the root directory /) where each .gcda file should be created is compiled into the test executable. When you attempt to run the same executable on a different machine or in a new directory on the same machine, the executable will (try to) create the .gcda files at the path the executable was compiled under. This can be problematic if the build directory has been removed or doesn’t exist on the current host machine.

This problem manifests in our build in two possible ways:

- Tests are run on an embedded target after being cross-compiled on a build machine.

- Unit tests are built in one build job and run in another, and these jobs may run on different build machines.

The first case was ruled out for this feature because we don’t currently run the unit tests on our target hardware. The second one had a much greater impact. Not only could the build execute on a different machine, but because Bamboo names its build working directories according to properties specific to the current build, even if the unit tests did happen to run on the same machine they would still use a different working directory than the build. Worse, without knowing the working directory or related properties of the unit test build job, there’s no way to know where the .gcda files will be deposited when the tests are run.

The easy solution was to side-step this problem by merging the two phases of the unit testing process (build, run) into a single build job. But being unsatisfied with this solution and conscious that this could make things difficult when we run our unit tests on embedded targets later, I checked to see what gcov‘s solution was.

gcov does address this issue with a feature targeted squarely at cross-compilation builds. The GCOV_PREFIX and GCOV_PREFIX_STRIP environment variables can be used to redirect the .gcda files for an executable compiled with --coverage to a location of your choosing. GCOV_PREFIX allows you to specify a directory to prepend to the output path, while GCOV_PREFIX_STRIP allows you to strip a given number of leading directories from the output path.

If GCOV_PREFIX_STRIP is used without GCOV_PREFIX it will make the output paths relative to the current working directory, allowing you to redirect the build root to the current directory while preserving the build tree structure. I also discovered that if you assign a sufficiently large number to GCOV_PREFIX_STRIP (e.g. 999), it will strip away the entire build tree and deposit all .gcda files in the current working directory. However, this wouldn’t work for our build process because the source tree is split into folders by module, and in order for hierarchical coverage results to be generated (more on this later) the .gcda files need to be in the correct directory for the module to which they relate.
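
The effect of the two variables on an output path can be modeled in a few lines of Python. This is a simplified sketch of the behavior described above, not the runtime's own logic; the function name and the example paths are invented for illustration.

```python
from pathlib import PurePosixPath


def redirected_gcda_path(compiled_path, prefix=None, strip=0):
    """Model where a .gcda file lands given GCOV_PREFIX / GCOV_PREFIX_STRIP."""
    if prefix is None and strip == 0:
        return PurePosixPath(compiled_path)  # neither variable set: unchanged
    parts = PurePosixPath(compiled_path).parts[1:]  # drop the leading "/"
    # Strip leading directories, but always keep the file name itself.
    kept = parts[min(strip, len(parts) - 1):]
    if prefix is None:
        return PurePosixPath(*kept)  # relative to the current directory
    return PurePosixPath(prefix, *kept)


compiled = "/user/build/foo.gcda"  # hypothetical path baked into the executable
print(redirected_gcda_path(compiled, prefix="/target/run", strip=1))
print(redirected_gcda_path(compiled, strip=1))
print(redirected_gcda_path(compiled, strip=999))
```

The three calls correspond to the three cases discussed: a prefix with one directory stripped, stripping without a prefix (giving a relative path), and an oversized strip count that leaves only the file name.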

In my case I chose to just use GCOV_PREFIX to redirect the output of our unit tests to a temporary directory, then used some ** globbing and sed to copy each .gcda output file into the correct place in the working directory tree alongside the .o and .gcno files for each module subdirectory.

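
The copy step can be sketched in Python instead of globbing and sed. This is a stand-in under stated assumptions rather than the script used in our build: it assumes a POSIX system, that GCOV_PREFIX pointed at prefix_dir, and that the original build root (the path compiled into the executables) is known. All names and paths are hypothetical.

```python
import shutil
from pathlib import Path


def relocate_gcda(prefix_dir, original_build_root, build_dir):
    """Copy redirected .gcda files into the matching places under build_dir."""
    # With GCOV_PREFIX set, files land at <prefix_dir>/<original absolute path>.
    redirected_root = Path(prefix_dir) / Path(original_build_root).relative_to("/")
    copied = []
    for gcda in sorted(redirected_root.rglob("*.gcda")):
        # Preserve the module subdirectory layout below the build root.
        target = Path(build_dir) / gcda.relative_to(redirected_root)
        target.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(gcda, target)
        copied.append(target)
    return copied
```

A call such as relocate_gcda("/tmp/gcov-out", "/path/compiled/into/tests", "build") would then place each .gcda file next to its .gcno and .o counterparts, provided the current build tree has the same layout as the original one.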

lcov and gcovr

The information produced by gcov is useful, but very basic and hard to navigate. For a more interactive experience there are tools that use the information produced by gcov to build richer and more interactive reports. The most prominent of these tools are gcovr and lcov. Both can generate HTML reports which break down coverage by lines; lcov also adds metrics for function coverage, while gcovr instead indicates the level of branch coverage. gcovr can also generate Cobertura XML output, although tools exist to achieve this with lcov too. gcovr is written in Python, while lcov is a set of tools written in Perl. I won’t go through the pros and cons of each program here, but for our coverage reporting I decided to go with lcov because it seemed more mature.

lcov works similarly to gcov, since the former calls the latter to generate coverage information. lcov performs a range of different tasks depending on how it is called; here are the ones I found useful:

- lcov -c -d module/path/*.cpp.gcda -b /path/to/build/dir/ --no-external -o coverage.info

  Bundles together the named .gcda files into an lcov .info file. The -b flag specifies the base workspace path (should be the build workspace path) which is stripped from file paths output by gcov where necessary. The --no-external flag works similarly to the -r flag in gcov, excluding coverage information for files outside the workspace. The -d argument can be specified multiple times to add more files to the bundle. The -o flag specifies the output file name; if not specified, output will be sent to stdout.

- lcov -e coverage.info '**/*.cpp' -o coverage-filtered.info

  The -e or --extract flag opens an existing .info file generated by a previous invocation of lcov and outputs the coverage information for all files that match the specified shell wildcard pattern.

- lcov -r coverage.info '**/*.h' -o coverage-filtered.info

  The -r or --remove flag opens an existing .info file and outputs the coverage information for all files that do not match the specified shell wildcard pattern.

- lcov -a coverage1.info -a coverage2.info -o coverage-all.info

  Merges the .info files specified by each -a flag into a single .info file specified by the -o flag.

- genhtml -o coverage -t "Unit Test Coverage" coverage-all.info

  Generates an HTML coverage report within the directory “coverage”. The -t flag sets the title of the report, which is otherwise the name of the input file(s). genhtml can be passed more than one input file, so it isn’t necessary to merge .info files together before generating a report.

Using these commands, the report generation process for our codebase looks like this:

- Run the instrumented test executables.

- Copy the .gcda files into the correct paths where the corresponding .gcno and .o files are located.

- lcov -c over each module directory to generate a .info file for each module.

- lcov -a to merge the module .info files together.

- lcov -r to remove unwanted files such as /usr/include/*.

- genhtml to generate the HTML report.
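
The lcov and genhtml steps of this process could be driven from a small Python script. The sketch below only builds the command lines (using the flags described earlier) and prints them; the module directories, intermediate file names and function name are placeholders, not our actual build configuration.

```python
import shlex


def coverage_commands(module_dirs, build_root, report_dir="coverage"):
    """Return the lcov/genhtml command lines for the steps above as argv lists."""
    commands = []
    module_infos = []
    for index, module_dir in enumerate(module_dirs):
        info = f"module{index}.info"  # placeholder name, one per module
        module_infos.append(info)
        # Capture coverage for one module directory.
        commands.append(["lcov", "-c", "-d", module_dir, "-b", build_root,
                         "--no-external", "-o", info])
    # Merge the per-module .info files.
    merge = ["lcov"]
    for info in module_infos:
        merge += ["-a", info]
    commands.append(merge + ["-o", "coverage-all.info"])
    # Remove unwanted files from the merged results.
    commands.append(["lcov", "-r", "coverage-all.info", "/usr/include/*",
                     "-o", "coverage-filtered.info"])
    # Generate the HTML report.
    commands.append(["genhtml", "-o", report_dir, "-t", "Unit Test Coverage",
                     "coverage-filtered.info"])
    return commands


for argv in coverage_commands(["build/moduleA", "build/moduleB"],
                              "/path/to/build/dir/"):
    print(shlex.join(argv))
```

To execute the commands rather than print them, each argv list can be passed to subprocess.run(argv, check=True). Keeping them as lists avoids shell quoting problems with the wildcard patterns.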

This process worked almost flawlessly, except for one hiccup: lcov didn’t include any coverage information for files which weren’t run at all as part of the test executables, i.e. files with 0% coverage. This artificially inflated the overall coverage results. This is in fact the default behavior of lcov, and it will delete .gcov files that show a file was not run at all. In my case the problem was deeper, as I discovered gcov wasn’t even generating .gcov files for source files that weren’t executed because of a bug in the version of gcov that ships with GCC 4.8.

Fortunately lcov can skirt around this by generating 0% coverage files for any file that has a corresponding .gcno file, which is all files compiled as part of the build. The lcov -c -i command works similarly to the lcov -c command, but instead of generating .info files with coverage information, it produces .info files that show 0% coverage for all files that were included in the build. These “empty” .info files can then be combined with the “full” .info files using lcov -a. The results generated by lcov -c -i act as a baseline, so the full results supplement these 0% baselines, giving coverage for all files, even those that weren’t run during testing.
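
To see why the baseline matters, here is a toy Python model of merging two .info files. It reads only the SF: (source file) and DA: (line, hit count) records and sums the counts per line; it is a simplified illustration, not how lcov -a is implemented, and the file contents are invented.

```python
BASELINE = """SF:/src/used.cpp
DA:1,0
DA:2,0
end_of_record
SF:/src/unused.cpp
DA:1,0
end_of_record
"""

TEST_RUN = """SF:/src/used.cpp
DA:1,3
DA:2,0
end_of_record
"""


def parse_line_counts(info_text):
    """Map source file -> {line: hits} from the SF: and DA: records."""
    counts = {}
    current = None
    for record in info_text.splitlines():
        if record.startswith("SF:"):
            current = record[3:]
            counts.setdefault(current, {})
        elif record.startswith("DA:") and current is not None:
            line, hits = record[3:].split(",")[:2]
            counts[current][int(line)] = int(hits)
        elif record == "end_of_record":
            current = None
    return counts


def merge_line_counts(*tracefiles):
    """Sum per-line hit counts across several tracefile texts."""
    merged = {}
    for text in tracefiles:
        for source, lines in parse_line_counts(text).items():
            file_counts = merged.setdefault(source, {})
            for line, hits in lines.items():
                file_counts[line] = file_counts.get(line, 0) + hits
    return merged


def line_coverage(merged):
    """Return (lines hit, lines instrumented) over all files."""
    hits = [h for lines in merged.values() for h in lines.values()]
    return sum(1 for h in hits if h > 0), len(hits)


print("without baseline:", line_coverage(merge_line_counts(TEST_RUN)))
print("with baseline:   ", line_coverage(merge_line_counts(BASELINE, TEST_RUN)))
```

Without the baseline, the file that was never run is missing from the totals altogether; with it, its lines count as instrumented but unhit, which lowers the overall figure to a more honest value.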

Integrating coverage results into Bamboo

Bamboo has some built-in support for displaying coverage metrics, but only through Atlassian’s (now open source) Clover coverage tool. The catch? Clover only supports the Java and Groovy languages. Luckily a script to convert gcov results to a Clover XML representation exists, created and maintained by Atlassian.

To get your coverage results showing up in Bamboo with nice features like historical charts and summary dashboards, simply generate .gcov files for your codebase as described in the earlier parts of this post, then run ./gcov_to_clover.py path/to/gcov/files/*.gcov. This will generate an XML file called clover.xml which can be integrated into your build by activating Clover coverage for that build plan. More detailed instructions are available in the Atlassian Bamboo documentation.

Taking this faux-integration one step further, it is also possible to integrate the HTML coverage report generated by lcov or gcovr into Bamboo to replace the “Clover Report” link on the Clover tab of each build. Simply make sure the HTML report is output to the directory target/site/clover in the build workspace and saved as a build artifact. That’s it! Bamboo will do the rest, and make the result accessible at one-click from the build coverage summary page.

Happy Christmas to all, and to all a good night!

Implementing coverage for our C++ codebase was a surprisingly intricate process. There are plenty of options available for generating coverage for C++ code, but I found the lack of direct support for any of these in Bamboo to be disappointing. Even the built-in integration for Clover seems to be a little neglected. Having information about code coverage for our tests gives us a better idea of where to spend effort to improve them in the future. It also makes it much easier to create comprehensive tests and suites the first time around. The real value in this feature will be unlocked for our team when this coverage information also includes our integration tests, which should cover a much larger proportion of our codebase.

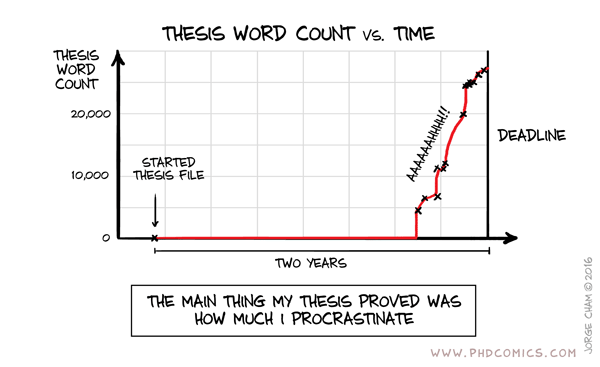

It’s taken significantly longer than I first planned to write these relatively small experiments up into blog posts. However, doing so has both helped solidify some of this knowledge in my mind and given me an opportunity to look at the process from a more objective angle. I rushed into the implementation of both of these features due to the limited time available; in doing so I missed some tools and opportunities that came up while I was researching for these posts. Likewise, writing these posts was much more challenging after the fact, when I had to rediscover references or try to remember the justification behind certain decisions or some technical details.

So, was it worth spending a large chunk of my “holiday” time (plus a large part of January) executing this work and writing it up? The process of converting the knowledge gained during this process into a blog post has certainly been valuable. Having this knowledge somewhere I can refer to it easily will save me time in the future when trying to recall it or share it with others. It was certainly worth implementing these changes for our build system, but:

- I still had to get the changes code reviewed and make some minor updates to get them through, so there was still some cost to the team as a whole to implement these changes.

- Management let me proceed with integrating the changes, but because this work had sidestepped our normal backlog planning process I think there were some lingering questions about why this work had even been done.

- The rest of my team had mixed feelings about this work. Almost everyone on the team recognized the value of both of these features, but there was also some resentment about sidestepping process to get this work done. More worrying, there was also some resentment created because other people on the team felt that it would encourage management to expect unpaid overtime from the whole team.

Given the political consequences of carrying out this sort of independent initiative, I wouldn’t do exactly the same thing again next year, at least not at my current company. Instead I would try and get buy-in from the wider team to ensure there’s less blowback when integrating the changes with the rest of the team’s work. Ideally the work would just be done on company time, or at least on paid overtime. If I still wanted to do something outside of the team’s agreed objectives, then I would probably just make the changes as part of an open source project, possibly of my own creation.

Perhaps next year I’ll just take a proper holiday instead, maybe on a tropical beach with no Wifi :)